At 11:23 on a Tuesday night, a 34-year-old warehouse supervisor in Columbus, Ohio hit “submit” on a personal loan application — $18,500 to consolidate three credit cards — and got a decision in under four minutes. No phone call. No loan officer. No waiting until the branch opened Thursday morning. Just a number on a screen and a funding timeline.

That story would have sounded like science fiction in 2019. In 2026, it’s a Tuesday.

But here’s where most coverage of AI loan approval gets it wrong: the conversation keeps centering on speed. How fast the decision comes. How seamless the experience feels. That’s not the interesting part. The interesting part — the part that actually matters to borrowers, regulators, and anyone who’s ever been on the wrong side of a credit decision — is what the machine is actually evaluating, and whether it’s doing it fairly. Speed is a feature. The model underneath is the product.

1. The Data Inputs Have Expanded Way Beyond Your Credit Score

Traditional underwriting ran on a fairly short checklist: FICO score, debt-to-income ratio, employment history, maybe a few years of tax returns. That was the whole picture. A lender looked at roughly the same five signals whether you were a 28-year-old freelance designer or a 52-year-old dentist with a paid-off house.

Modern AI underwriting models — the ones deployed by larger online lenders and credit unions experimenting with automated decisioning — pull from a significantly wider pool. We’re talking about:

- Bank account cash flow patterns (not just balance, but the consistency and frequency of income deposits)

- Rent payment history, which historically never showed up in credit files

- Utility payment data, where available through bureau-connected services

- Length and stability of the applicant’s email address and phone number — a soft signal for identity stability

- Device and behavioral metadata during the application itself (time spent on each field, how many times a form was corrected)

That last one surprises people. Some lenders’ models have flagged that applicants who copy-paste income figures — rather than type them — show slightly different default patterns. Whether that’s signal or noise is genuinely debated. But it illustrates how far the data perimeter has expanded.

The Consumer Financial Protection Bureau has been watching this closely. Without citing specific pending rules, the general regulatory posture in 2026 is that lenders using alternative data must be able to explain adverse actions with specificity — “our model scored you lower” is not a legally sufficient denial reason. That tension between model complexity and explainability requirements is one of the live fault lines in the industry right now.

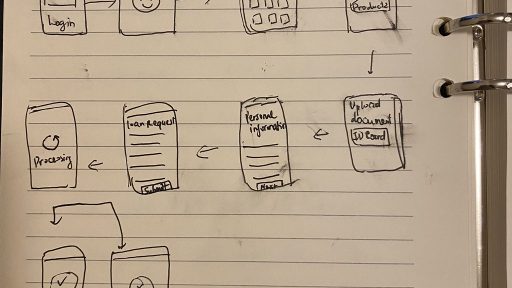

2. How the Decisioning Engine Actually Runs

Here’s a simplified version of what happens in those four minutes:

Step one: identity and fraud check. The system verifies that you are who you say you are, cross-referencing submitted information against identity databases, device signals, and fraud consortium data. This alone used to take 24 hours when done manually.

Step two: bureau pull and score calculation. A hard or soft inquiry hits one or more of the major credit bureaus. The raw score is ingested, but in many modern systems it’s just one input among dozens — not the deciding factor it once was.

Step three: alternative data enrichment. If you’ve linked a bank account (increasingly common, driven by open banking adoption), the model analyzes your cash flow. A person with a 620 FICO but 18 consecutive months of steady direct deposits and no overdrafts looks very different to a well-trained model than someone with the same score and irregular income.

Step four: risk tiering and pricing. The model doesn’t just output approve/deny — it outputs a risk tier, which determines your interest rate. This is where two applicants with similar profiles can end up with rates that differ by 4 or 5 percentage points, based on signals neither of them knew were being evaluated.

Step five: compliance review. Automated rules check the decision against fair lending requirements. Any pattern suggesting disparate impact on a protected class is supposed to trigger a review. In practice, how robustly this runs varies significantly by institution.

3. A Real Application, With the Messy Parts Included

Last spring, a friend — mid-40s, self-employed photographer, good income but irregular deposits — applied for a $12,000 home improvement loan through an online lender. Her FICO was 694. Solid, not spectacular.

The first application came back denied in six minutes. Reason given: “income verification insufficient.” She hadn’t linked her bank account — she’d uploaded PDF bank statements instead. The model, apparently, weighted the linked-account cash flow data heavily enough that the static document upload triggered a lower confidence score.

She reapplied two days later, this time connecting her checking account directly. Approved. Rate: 11.4%, 36-month term. The funding hit in two business days.

The system worked — eventually. But she almost didn’t reapply. She assumed the denial was final. A lot of people do. That’s a real failure mode that speed and automation don’t solve. If anything, a fast denial feels more authoritative than a slow one. It doesn’t invite you to ask questions.

4. What Doesn’t Work — And Why People Keep Doing It Anyway

There are a few popular strategies for navigating AI-powered loan approvals that are either outdated or just wrong.

Applying to five lenders at once to “see who gives the best rate.” Multiple hard inquiries in a short window do get bucketed together by the bureaus — usually within a 14 to 45-day window depending on the scoring model — but this only applies cleanly to mortgage and auto loan shopping. For personal loans and credit cards, the treatment is less consistent across models, and some lenders’ internal systems flag multiple recent inquiries as a risk signal regardless. Rate shopping is smart. Scattershot applications are not.

Paying down one card to zero right before applying. Credit utilization does matter, and reducing it can lift your score in the short term. But if an AI model is also analyzing your cash flow and the timing looks like you depleted savings to game the application, that’s a different signal. Gaming the score without understanding the full model is playing chess while the opponent is playing a different game entirely.

Assuming a denial is permanent. As the example above shows, a denial is often a model’s response to a specific data gap, not a judgment about your overall creditworthiness. Calling the lender — yes, actually calling — and asking which specific input drove the denial is underused and surprisingly effective at some institutions.

Trusting “soft pull pre-qualification” as a reliable preview of your actual rate. Pre-qualification tools use simplified models. The full underwriting model runs on more data. The pre-qual rate and the actual rate can differ by several percentage points once income verification and alternative data are applied. It’s a directional signal, not a commitment.

5. The Fairness Problem Isn’t Going Away

Industry research — including work published by academic researchers studying automated credit decisions — has consistently found that machine learning models can reproduce and sometimes amplify historical lending disparities, even without explicitly using race or zip code as inputs. Proxies for those variables exist throughout the data: certain spending patterns, certain income deposit structures, certain gaps in credit file length that correlate with demographic groups that were historically excluded from credit access.

Some lenders have made genuine investments in fairness-aware model design. Others have not. The honest answer is that as a borrower, you largely can’t tell which kind of lender you’re dealing with from the outside.

What you can do: if you receive an adverse action notice, you have a legal right to a specific explanation. Push for it. If the explanation is vague, that’s worth noting — and, depending on the situation, worth escalating to your state’s banking regulator or the CFPB’s complaint portal, which remains an active and logged channel.

6. What Actually Improves Your Position in an AI-Reviewed Application

None of this is about tricking the system. It’s about understanding what the system is actually measuring and presenting accurate information in the format it’s designed to read.

- Link your bank account when prompted. Cash flow data benefits applicants with thin credit files or irregular income far more than it hurts anyone. Opting out because it feels intrusive often just reduces the richness of your file.

- Stabilize your income picture six months before a major loan application. Consistent deposit patterns over a meaningful period carry weight. A spike of income the month before you apply reads differently than a consistent baseline.

- Check for errors in your credit file before applying. The three major bureaus — Equifax, Experian, and TransUnion — each allow free annual reports through the federally mandated access point. Errors in credit files are more common than most people expect, and disputing them is a slower process than people want it to be.

- Treat the first denial as a question, not an answer. Ask what drove it. Reapply with the gap addressed if possible. The model is looking for specific signals — sometimes the fix is straightforward.

Start Here This Week

You don’t need to overhaul your finances to start engaging with this more intelligently. Three small things:

Pull your credit report today. Not a score — the actual report, line by line. Look for accounts you don’t recognize or payment history that’s marked incorrectly. This takes about 20 minutes and costs nothing.

Write down the last three months of your income deposits. Dates, amounts, sources. That’s your cash flow story — the same story an AI model would read from your bank account. Seeing it clearly helps you understand how a model might read it.

If you were recently denied credit anywhere, request the full adverse action explanation in writing. You’re entitled to it. Read it carefully. The specific language often tells you exactly which variable to address before your next application.

The machine is faster than it’s ever been. That doesn’t make it smarter than someone who understands what it’s looking for.